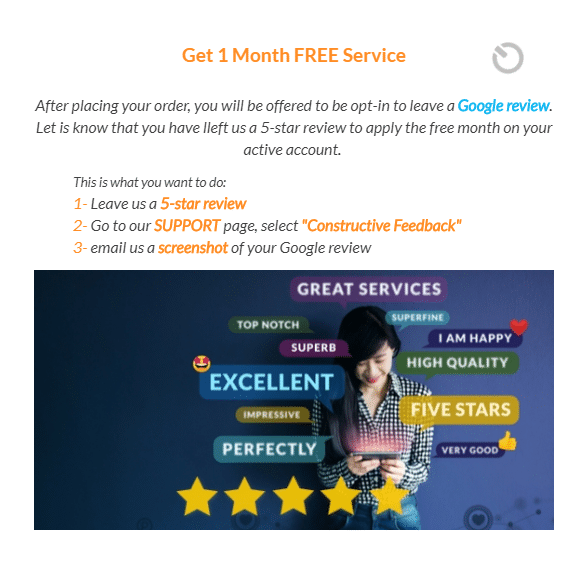

I recently purchased a cheap service plan I wanted to trial from SpeedTalk Mobile. During the checkout process, I was shown the following:

This clearly violates Google’s guidelines.1

I also noticed SpeedTalk keyword stuffing in its website’s footer—another questionable strategy:2

I was pretty excited about SpeedTalk. Not anymore.

Alternatives to SpeedTalk

If you’re looking for an alternative low-cost carrier, take a look at my review of Mint Mobile or my larger list of recommended budget-friendly carriers.