I’m not a fan.

TopTenReviews ranks products and services in a huge number of industries. Stock trading platforms, home appliances, audio editing software, and hot tubs are all covered.

TopTenReviews’ parent company, Purch, describes TopTenReviews as a service that offers, “Expert reviews and comparisons.”1

Many of TopTenReviews’ evaluations open with lines like this:

I’ve seen numbers between 40 and 80 hours in a handful of articles. It takes a hell of a lot more time to understand an industry at an expert level.

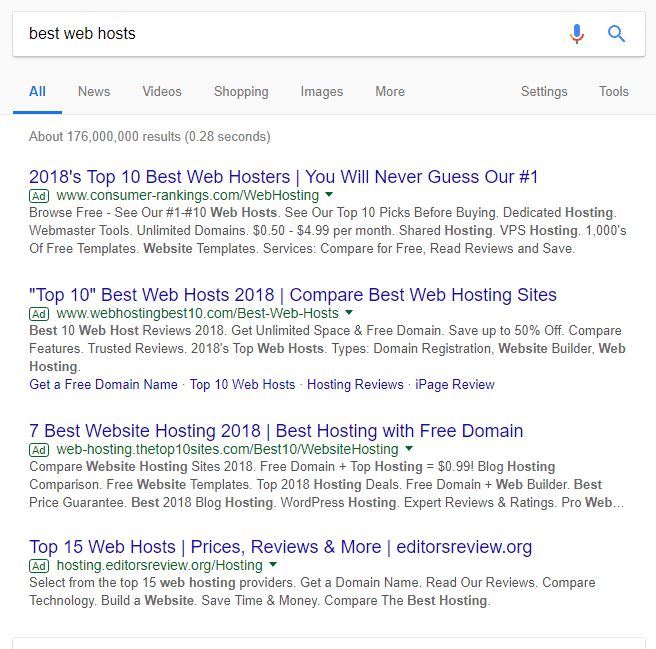

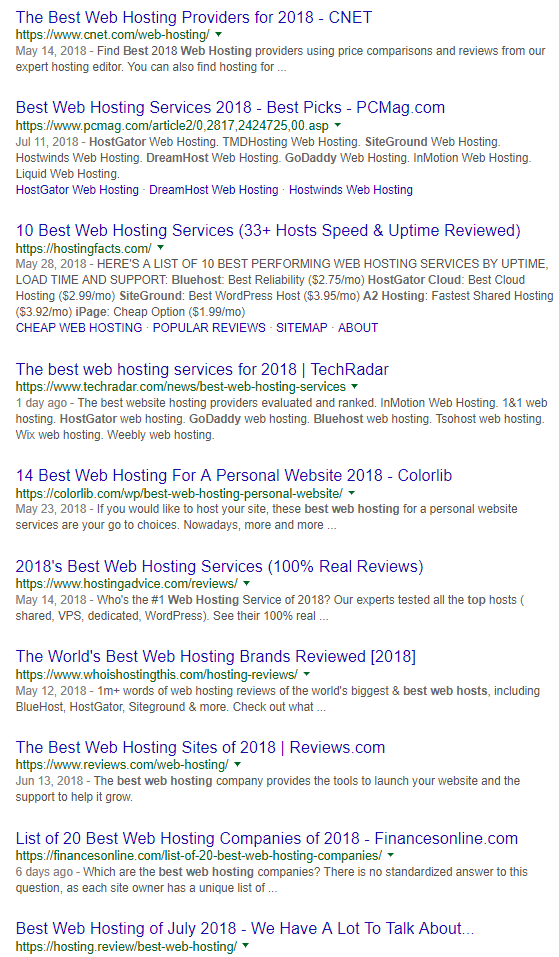

I’m unimpressed by TopTenReviews’ rankings in industries I’m knowledgeable about. This is especially frustrating since TopTenReviews often ranks well in Google.

A particularly bad example: indoor bike trainers. These devices can turn regular bikes into stationary bikes that can be ridden indoors.

I love biking and used to ride indoor trainers a fair amount. I suspect the editor who came up with the trainer rankings at TopTenReviews couldn’t say the same.

The following paragraph is found under the heading “How we tested” on the page for bike trainers:

There’s no mention of using physical products.

The top overall trainer is the Kinetic Road Machine. It’s expensive but probably a good recommendation. I know lots of people with either that model or similar models who really like their trainers.

However, I don’t trust TopTenReviews’ credibility. TopTenReviews has a list of pros and cons for the Kinetic Road Machine. One con is: “Not designed to handle 700c wheels.” It is.

It’s a big error. 700c is an incredibly common wheel size for road bikes. I’d bet the majority of people using trainers have 700c wheels.4 If the trainer weren’t compatible with 700c wheels, it wouldn’t deserve the “best overall” designation.

TopTenReviews even states, “The trainer’s frame fits 22-inch to 29-inch bike wheels.” 700c wheels fall within that range. A bike expert would know that.
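For anyone who wants to check the arithmetic, here is a rough sketch in Python. It assumes the standard ISO/ETRTO bead seat diameter of 622 mm for 700c rims and approximates tire height as equal to tire width, which is only a rule of thumb. The function names and the list of tire widths are my own illustration, not anything from TopTenReviews or Kinetic.

```python
MM_PER_INCH = 25.4
BEAD_SEAT_DIAMETER_MM = 622  # ISO/ETRTO rim diameter for 700c wheels


def outer_diameter_inches(tire_width_mm: float) -> float:
    """Approximate outer wheel diameter in inches.

    Assumption: tire height is roughly equal to tire width, so the
    tire adds about one width on each side of the rim.
    """
    return (BEAD_SEAT_DIAMETER_MM + 2 * tire_width_mm) / MM_PER_INCH


def fits_stated_range(diameter_in: float, low: float = 22.0, high: float = 29.0) -> bool:
    """Check a diameter against the 22-inch to 29-inch range TopTenReviews quotes."""
    return low <= diameter_in <= high


if __name__ == "__main__":
    # Common road tire widths in millimeters (illustrative choices).
    for width in (23, 25, 28, 32):
        diameter = outer_diameter_inches(width)
        print(f"700x{width}c: ~{diameter:.1f} in, fits: {fits_stated_range(diameter)}")
```

Under these assumptions, every common road tire width lands between roughly 26 and 27 inches, comfortably inside the stated range.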

TopTenReviews’ website has concerning statements about its approach and methodology. An excerpt from their about page (emphasis mine):

Maybe TopTenReviews came up with an awesome algorithm no one else has thought of. I find it much more plausible that, if a single algorithm exists at all, it’s kept private because it’s silly and easy to find flaws in.

TopTenReviews receives compensation from many of the companies it recommends. While this is a serious conflict of interest, it doesn’t mean all of TopTenReviews’ work is bullshit. However, I see this line on the about page as a red flag:

Avoiding bias is difficult. Totally eliminating it is almost always unrealistic.

Employees doing evaluations will sometimes have a sense of how lucrative it will be for certain products to receive top recommendations. These employees would probably be correct to bet that they’ll sometimes be indirectly rewarded for creating content that’s good for the company’s bottom line.

Even if the company is being careful, bias can creep in insidiously. Someone has to decide what the company’s priorities will be. Even if reviewers don’t do anything dishonest, the company strategy will probably entail doing evaluations in industries where high-paying affiliate programs are common.

Reviews will need occasional updates. Won’t updates take priority in industries where they could shift high-commission products to higher rankings?

TopTenReviews has a page on foam mattresses that can be ordered online. I’ve bought two extremely cheap Zinus mattresses on Amazon.7 I’ve recommended these mattresses to a bunch of people. They’re super popular on Amazon.8 TopTenReviews doesn’t list Zinus.9

Perhaps it’s because other companies offer huge commissions.10 I recommend “The War To Sell You A Mattress Is An Internet Nightmare” for more about how commissions shadily distort mattress reviews. It’s a phenomenal article.

R-Tools Technology Inc. has a great article discussing their software’s position in TopTenReviews’ rankings, misleading information communicated by TopTenReviews, and conflicts of interest.

The article suggests that TopTenReviews may have declined in quality over the years:

Purch has quite a different business model than TopTenReviews did when it first started. Purch, which boasted revenues of $100 million in 2014, has been steadily acquiring numerous review sites over the years, including TopTenReviews, Tom’s Guide, Tom’s Hardware, Laptop magazine, HowtoGeek, MobileNations, Anandtech, WonderHowTo and many, many more.11

I don’t think I would have loved the pre-2013 website, but I think I’d have more respect for it than today’s version of TopTenReviews.

I’m not surprised TopTenReviews can’t cover hundreds of product types and consistently provide good information. I wish Google didn’t let it rank so well.