Traditionally, companies crowdsource data on cell network performance by sneaking code into unrelated apps.

A company following the traditional model might pay the developer of a mobile game or a note-taking app to wedge information-gathering code into their apps. Generally, app users don’t realize what’s going on.

Coverage Critic is working on a new model for crowdsourcing data. In 2023, Coverage Critic partnered with DIMO, a project that’s trying to change how automotive data is collected and used. Members of the DIMO community opt to share data from their vehicles and get rewarded for doing so.

Vehicles connected to the DIMO Network have collectively driven hundreds of millions of miles, building a colossal dataset that measures the performance of the major US networks.

Roam Network

Today, Coverage Critic is announcing a partnership with Roam Network. Roam’s purpose-built Android app allows users to collect data about their network operator using an Android device of their choice. Like DIMO, Roam rewards users for their contributions.

Complementary Data

The DIMO and Roam datasets complement one another well. DIMO’s data comes from a narrow range of devices, and all of it is captured in an in-vehicle context. When building coverage maps, that limited context is helpful: because the devices and conditions are so similar, differences in observed signal strength tend to indicate genuine differences in network quality. Making sense of the data doesn’t require muddling through other discrepancies.1

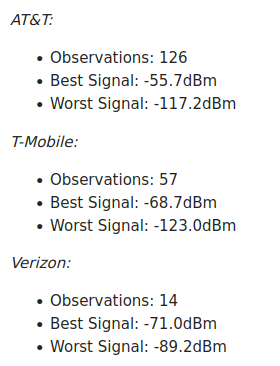

A constrained scope of data collection often allows for crisper inferences, but it can also mean missing something about the real-world performance of cell networks. That’s where Roam Network comes in. Members of the Roam community run a wide range of Android devices. Data is gathered across diverse conditions: indoors, outdoors, stationary, and in motion. The big networks, like AT&T, Verizon, and T-Mobile, are all accounted for. But Roam also captures data from smaller networks such as GCI in Alaska.

Explore The Data

Now, Coverage Critic gets the best of both worlds. Coverage Critic can choose to rely on Roam data, DIMO data, or both, depending on the situation.

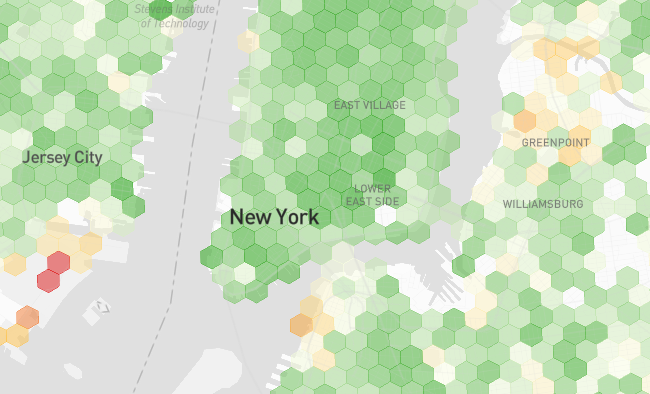

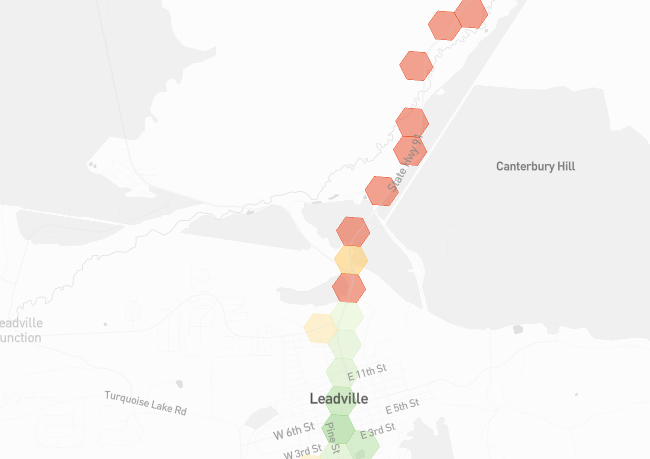

A coverage map relying on Roam data is live on Broadband Map.

Soon, data from the FCC, DIMO, and Roam will be combined into a unified map.